Abstract

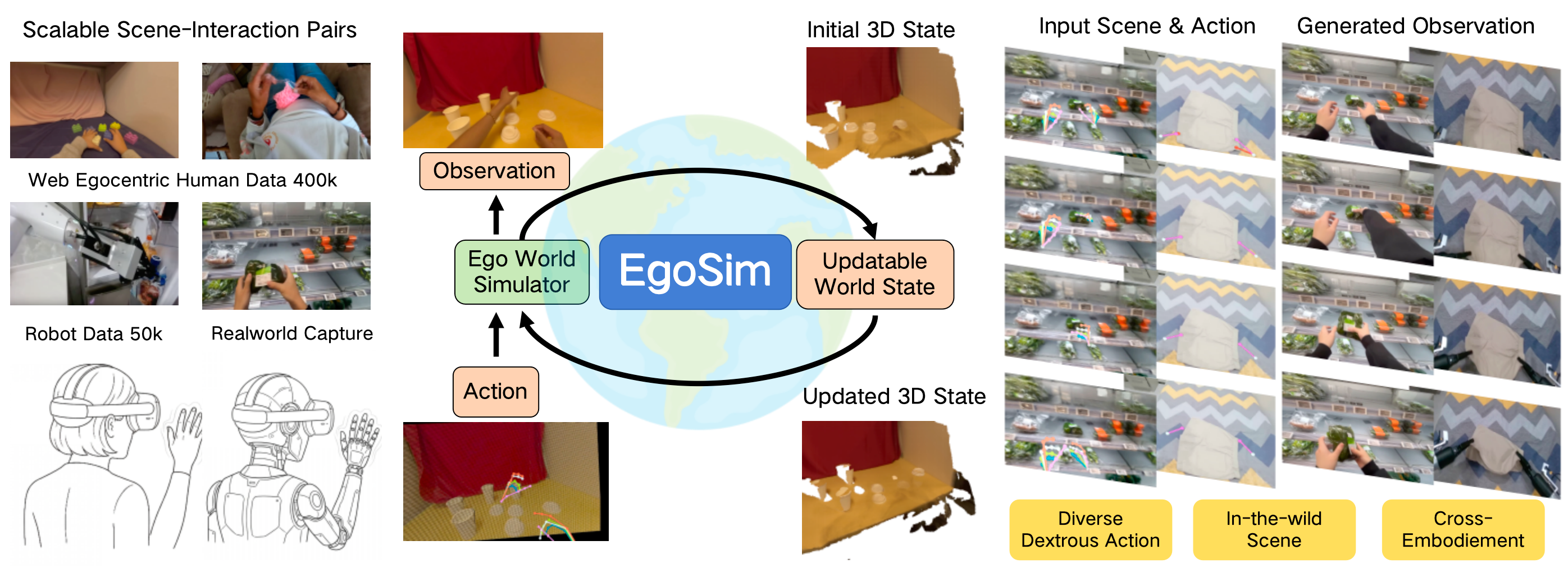

World simulators generate realistic synthetic observations conditioned on an initial environment state and the actions of embodiments within the world. A general egocentric world simulator should be able to generate diverse embodiment-object interactions with high spatial consistency across a wide range of real-life scenes. It is also critical for the simulator to memorize the environment state and update it from its own generated observations, so that simulation can continue over time. To address these challenges, we propose EgoSim, an egocentric world simulator that generates high-quality interactions from dexterous action inputs and maintains an updatable, interaction-aware 3D state to support continuous simulation. To improve generalization, we design a scalable data pipeline that extracts high-quality scene-interaction pairs from in-the-wild egocentric videos. Extensive experiments demonstrate that EgoSim outperforms existing methods in interaction quality, diversity, spatial consistency, and generalization, while also supporting continuous generation.